Mom Shocked When Ex Steps Into Current Husband's House

Navigating the complexities of co-parenting after a divorce is no easy feat, but one mom is feeling incredibly grateful for the harmonious dynamic she shares with her ex-husband.

In a touching video that has taken social media by storm, the remarried woman reveals the beautiful relationship between her former spouse and her new family. The video, which has garnered over four million views, showcases a heartwarming scene that highlights the power of mature and respectful co-parenting.

A Shocking Visit

Woman snaps pics of current husband waiting for her ex to show up.

@mrspinchofficial/TikTok

In the video, Mom’s ex-husband arrives to pick up their son for the weekend. Instead of making a quick exit, he steps inside to catch up with the boy's stepdad and spend quality time with his ex-wife’s younger children. The wholesome interaction captured in the video is truly endearing.

A Message of Gratitude

Woman snaps pic of her current husband and her ex-husband across from each other.

@mrspinchofficial/TikTok

“When your ex is only supposed to pick up his son… but he comes in to see your husband, and your other children,” the woman wrote over the heartwarming footage.

She added, “I’m so lucky to have two mature men who have spent the last nine years getting along… for his sake. And how nice for my children I’ve had since, to love seeing their big brother’s dad, too.”

Praise From The Community

@mrspinchofficial: On my previous post, I can’t believe the amount of men that wouldn’t welcome this!!

The video has touched many hearts, with commenters applauding the kindness and maturity displayed by all involved.

“That’s two secure men there,” wrote one user. “Love it.”

Another added, “This was so pleasant to watch. After having parents who split and this never would have happened this was such a beautiful sight to see. Well done to you all. Coparenting isn't easy.”

A third said, “The right thing to do for the kids.”

"It's So Lovely To Be At Peace"

Woman captions photo of her ex-husband holding her kids.

@mrspinchofficial/TikTok

This video is a testament to the strength and beauty of a supportive co-parenting relationship, showing that with maturity and mutual respect, families can create a loving and nurturing environment for their children, no matter the circumstances. "It's so lovely to be at peace, I can only imagine the energy it must take not to be..." she wrote in the caption.

For those who don't have the perfect relationship with their ex, take heart. Every situation is unique, and building a positive co-parenting relationship takes time, effort, and patience.

Remember that progress, no matter how small, is still progress. Focus on creating a loving environment for your children and know that with time, understanding, and communication, even the most challenging relationships can improve. There is always hope for a better tomorrow.

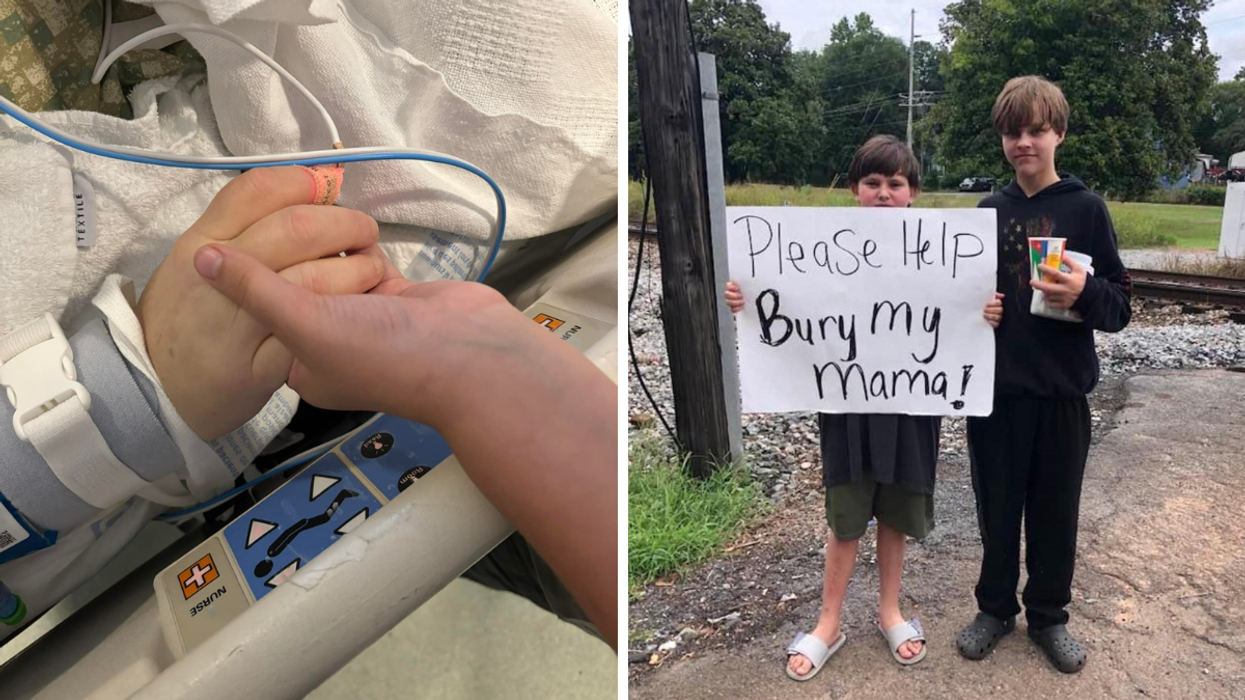
